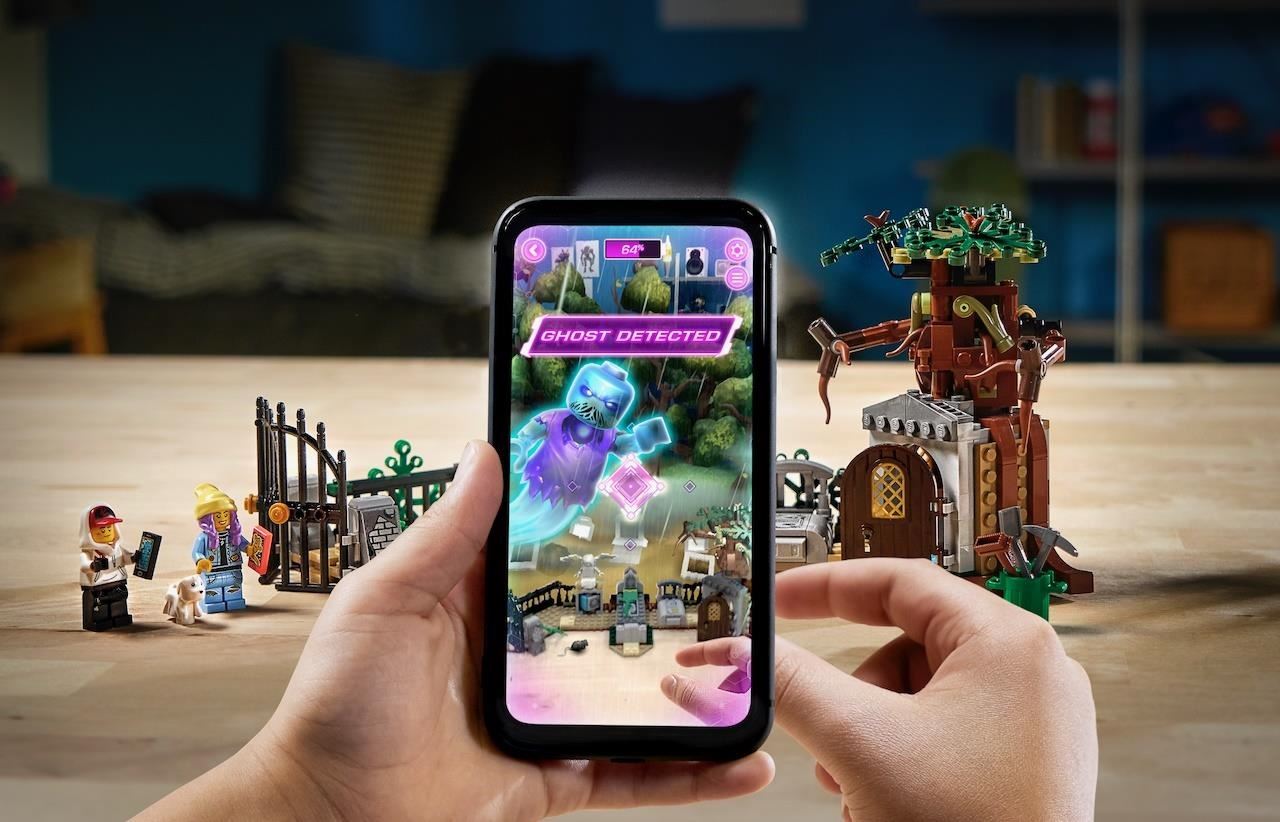

In this age of technology, most people are already familiar with augmented reality. You probably know a few augmented reality apps, such as Snapchat and Pokémon Go. AR technology powers these apps, but there are plenty of newer augmented reality examples that you should know about.

But before we go through them, let’s first look at what augmented reality is and what types exist.

What Is Augmented Reality?

Augmented reality, or AR, is the result of using technology to enhance the real-world environment with computer-generated sounds, images, and text. It alters one’s perception of reality by seamlessly weaving computer-generated perceptual information into the physical world. As augmented reality matures, the number of applications continues to grow.

Types Of Augmented Reality

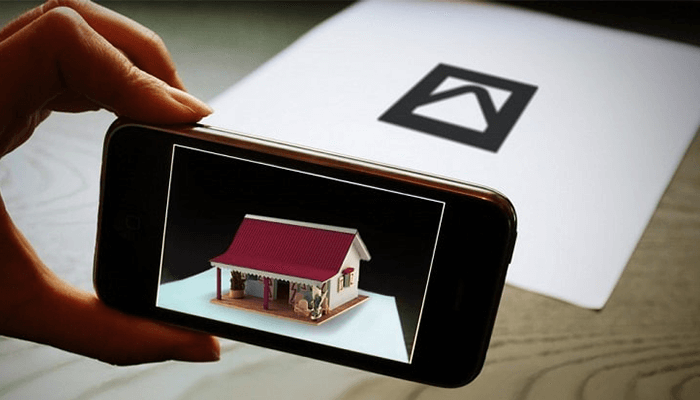

Marker-Based Augmented Reality

Marker-based augmented reality, also called image recognition, uses a visual marker and a camera, and generates a result only when the camera detects the marker. Marker-based applications use distinct but simple patterns (such as QR codes) as markers so that they can be recognized easily.

Markerless Augmented Reality

This type uses the GPS, digital compass, velocity meter, or accelerometer embedded in your device to provide data on your location. It is commonly used for mapping directions, finding nearby businesses, and other location-based applications.
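
As a rough illustration of how a location-based app might find nearby businesses from a GPS fix, here is a minimal Python sketch. It uses the haversine formula for the distance between two coordinates. The function names, the sample places, and the 500-metre radius are assumptions made for illustration, not details of any particular AR app.

```python
import math

EARTH_RADIUS_M = 6_371_000  # mean Earth radius in metres


def haversine_m(lat1, lon1, lat2, lon2):
    """Great-circle distance in metres between two latitude/longitude points."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = phi2 - phi1
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * EARTH_RADIUS_M * math.asin(math.sqrt(a))


def nearby(user, places, radius_m):
    """Return (distance, name) pairs within radius_m of the user, nearest first."""
    lat, lon = user
    hits = []
    for name, (p_lat, p_lon) in places.items():
        d = haversine_m(lat, lon, p_lat, p_lon)
        if d <= radius_m:
            hits.append((d, name))
    return sorted(hits)


# Hypothetical sample data: a user position and two places of interest.
user_position = (0.0, 0.0)
sample_places = {"Cafe": (0.0, 0.001), "Museum": (0.0, 1.0)}

for distance, name in nearby(user_position, sample_places, radius_m=500):
    print(f"{name}: {distance:.0f} m away")
```

A real app would combine a result like this with the compass heading to decide where on the screen each label should appear.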

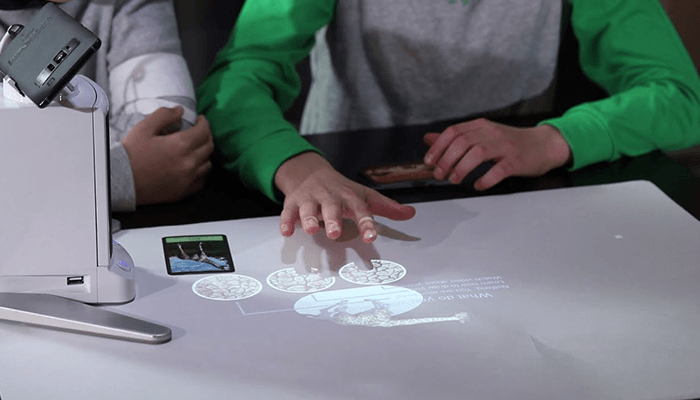

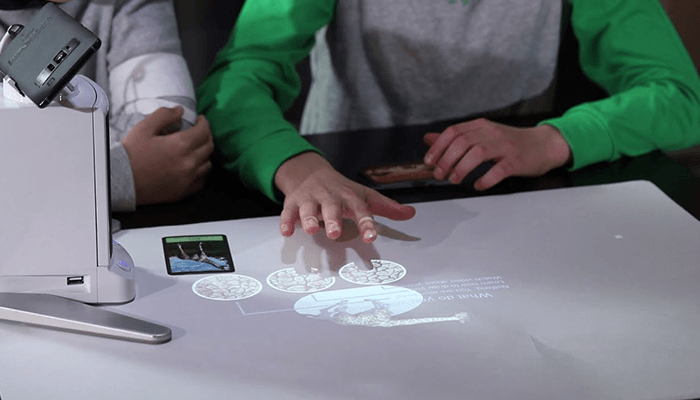

Projection-Based Augmented Reality

This type of augmented reality works by projecting artificial light onto real-world surfaces. An application of this type allows human interaction by sending light onto a real-world surface and then sensing how a person interacts with that light. It can also use laser plasma technology to project a 3D hologram.

Superimposition Augmented Reality

Superimposition augmented reality either partially or fully replaces the original view of an object or space with an augmented view of the same object. Object recognition plays a vital role in this type, because an application cannot replace the original view with an augmented one if it can’t determine what the object is.

AR is widely used in many fields, including retail, construction and maintenance, tourism, education, entertainment, and even healthcare. Different platforms, such as websites, smartphone applications, and catalogs, are using it. That’s why we have rounded up these cool augmented reality examples you should know about.

Examples Of Augmented Reality

Project Color App From Home Depot

One of the hardest things about designing a room is deciding which paint color to use. Will your furniture match the paint on your walls? Does the color overpower the rest of the room? These are just some of the dilemmas homeowners face, and most of the time, deciding on a paint color delays the completion of a construction or renovation project.

Fortunately, Home Depot has come up with a solution by creating the Project Color app. What makes this app cool is that it preserves the room’s dimensions as you try different paint colors in your space, painting around objects and accounting for the shadows and lighting conditions in the room. This means that you get a realistic visual of how the paint will look.

BMW Augmented

BMW has launched an app that lets customers use their smartphone or tablet to bring the BMW catalog to life. Simply hold your device over selected images, and the app transforms each page into an interactive product experience. You can see 3D models, interactive product information, videos, high-resolution images, and other information.

With this augmented reality catalog, you can dive into X-ray views of components, try out variations of LEDs and laser lights, and explore 360° interior views. To fully experience the catalog, you need to download the application, which is available on both the App Store and Google Play.

AR Poser

Disney has launched an application that lets you take a selfie with Disney digital avatars imitating your pose. The number of poses and shapes stored in its library is currently limited. However, it is a good example of how augmented reality can make entertainment more fun.

Although AR Poser is in its initial stages, the application has the potential to be a game-changer in picture modification and AR photography. Currently, the whole process of interpreting a pose and projecting the Disney avatar takes almost two seconds. Soon, it is expected to support a wide range of devices, including smartphones.

Google’s Measure

Google has released a lot of promising, helpful applications, but this is one that not many people expected. By simply pointing your phone’s camera at an item, you can easily get its measurements. Pretty cool, right? You can get readings in either the metric or the imperial system, and you can also take a photo of the measurement.

This augmented reality app is free to download on any Android smartphone that supports Google’s ARCore platform. It uses ARCore’s spatial features to measure real-world objects. ARCore isn’t perfect, so we wouldn’t recommend relying on this app for important measurements.
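
To give a feel for the arithmetic behind a measuring app, here is a minimal Python sketch. It assumes the AR framework has already supplied two 3D points in metres, which is an assumption for illustration and not a description of how Measure or ARCore works internally. The function names and sample coordinates are hypothetical.

```python
import math

INCHES_PER_METRE = 39.3701  # conversion factor for imperial readings


def distance_m(point_a, point_b):
    """Straight-line distance in metres between two (x, y, z) points."""
    return math.dist(point_a, point_b)


def format_length(metres, system="metric"):
    """Format a length as centimetres (metric) or inches (imperial)."""
    if system == "metric":
        return f"{metres * 100:.1f} cm"
    if system == "imperial":
        return f"{metres * INCHES_PER_METRE:.1f} in"
    raise ValueError(f"unknown unit system: {system}")


# Hypothetical points, e.g. the two ends of a book lying on a table.
start = (0.0, 0.0, 0.0)
end = (0.3, 0.4, 0.0)

length = distance_m(start, end)
print(format_length(length))              # metric reading
print(format_length(length, "imperial"))  # imperial reading
```

Small errors in where the two points are placed carry straight through to the result, which is one reason to treat such readings as estimates.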

Sephora’s Virtual Artist

Rejoice, make-up fans! This cool augmented reality app lets you try on make-up without the hassle of going to a physical store. The app uses AR technology to let you try out Sephora’s products in a live 3D experience. This innovative feature helps you find the make-up product that suits you best.

Sephora’s Virtual Artist not only lets you try different products but also inspires you with looks created by Sephora experts. You can also learn new techniques with step-by-step tutorials customized to your face. It is also one of the best examples of augmented reality on a website.

AccuVein

Augmented reality has made its way into the healthcare industry with revolutionary tools such as AccuVein. AR makes it significantly easier for physicians and clinicians to find a patient’s vein on the first try during a blood draw or IV insertion. It also improves patient experience and satisfaction.

AccuVein uses projection-based augmented reality. It combines a laser-based scanner, a processing system, and digital laser projection in a portable handheld device. Its users can view a virtual real-time image of the arrangement of blood vessels on the surface of the skin.

IKEA Place Mobile App

One of the most talked-about AR examples is the IKEA mobile app. That is because this Swedish home furnishing company is a pioneer in using augmented reality to enhance the customer experience in retail. The app lets you see exactly how a furniture item would look and fit in your home.

IKEA Place lets you place true-to-scale 3D furniture in your home using your own iPhone camera. On top of that, the products’ measurements are accurate down to a millimeter, so you don’t have to worry about whether an item will fit in your room. This will surely help make life better for the many.

City Guide Tour

Definitely a cool example of augmented reality! This app lets users point their device at different locations or objects and get historical details about them. Aside from historical details, it also shows the description, opening hours, and admission fees of different establishments.

Several cities are available on the app. If your city is not on the app, you have the option to become a city guide. Super cool! The app is currently available on the App Store and is coming soon to Google Play. This is surely one example of augmented reality you should know.

Zombies Everywhere

Heads up, gamers! Forget about catching Pokémon when you can fight off zombies along the way. This is more than your regular shoot-zombies-in-your-face game. It uses augmented reality to put zombies in your surroundings. You just need to look through your iPhone or iPad as if you’re taking a photo.

What’s cool about this game is that your movement controls it. Whether you’re taking a walk or just sitting in a cafe, all you have to do is open the app, look around, and kill the zombies. It has really good 3D graphics that you’ll surely enjoy.

These cool examples show how AR can add value and enhance the user experience across different applications. New augmented reality examples keep appearing, which points to increasing investment that will drive more growth.